Fix: pip install fails with "No connection could be made because the target machine actively refused it"

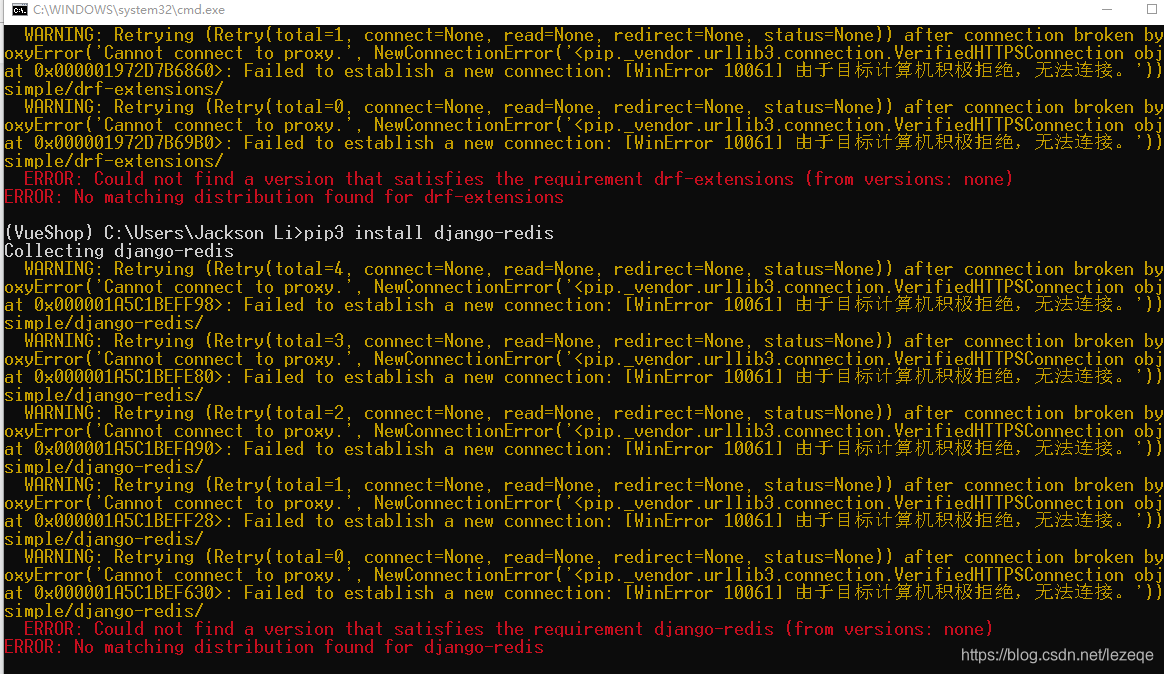

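When running pip install inside a virtual environment, I first assumed the problem was specific to one third-party package, but after that every pip install failed the same way: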

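WARNING: Retrying (Retry(total=0, connect=None, read=None, redirect=None, status=None)) after connection broken by 'ProxyError('Cannot connect to proxy.', NewConnectionError(' : Failed to establish a new connection: [WinError 10061] 由于目标计算机积极拒绝,无法连接。')

The Chinese text is the localized Windows message for WinError 10061: "No connection could be made because the target machine actively refused it." The ProxyError shows that pip was trying to reach the network through a proxy, and that the connection to the proxy itself was refused.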
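A refused connection usually means nothing is listening at the proxy address pip was told to use. As a rough way to confirm that, the sketch below tries to open a TCP connection to a proxy address. The function name `proxy_reachable` and the address `127.0.0.1:7890` are hypothetical and only for illustration; substitute whatever proxy host and port your own settings contain.

```python
import socket


def proxy_reachable(host, port, timeout=3.0):
    """Return True if something accepts TCP connections at host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers ConnectionRefusedError (what WinError 10061 maps to in Python)
        # as well as timeouts and unreachable hosts.
        return False


if __name__ == "__main__":
    # Hypothetical proxy address; replace it with the one from your settings.
    print(proxy_reachable("127.0.0.1", 7890))
```

If this prints `False` for the configured proxy, pip fails for the same reason: the proxy setting points at a service that is not running.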

I found two articles on the subject:

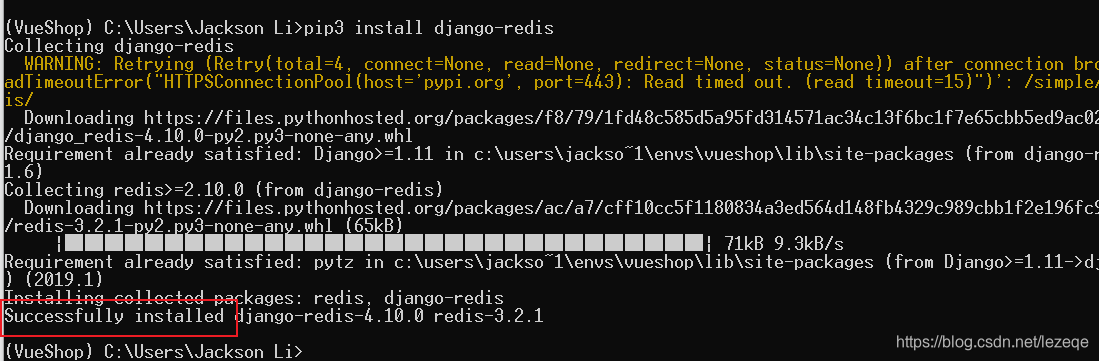

Both say the proxy is the problem, and the fix is to delete the marked variables:

Every solution that a Baidu search turned up blamed the proxy, and this method also targets the proxy, so I followed it. It did in fact work.
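To see which variables are candidates for deletion, a short script can list the proxy-related environment variables visible to the current process. This is a minimal sketch, not the method from the articles above: the variable names `HTTP_PROXY`, `HTTPS_PROXY`, and `ALL_PROXY` are the ones HTTP clients commonly read, and are an assumption about what the original screenshot marked. The helper names are hypothetical.

```python
import os

# Names commonly read by HTTP clients (matched case-insensitively).
# Assumption: these are the kind of variables the fix refers to.
PROXY_VARS = ("HTTP_PROXY", "HTTPS_PROXY", "ALL_PROXY")


def is_proxy_var(name):
    """True if the variable name is one of the known proxy variables."""
    return name.upper() in PROXY_VARS


def find_proxy_vars(environ=None):
    """Return {name: value} for every proxy variable with a non-empty value."""
    env = os.environ if environ is None else environ
    return {k: v for k, v in env.items() if is_proxy_var(k) and v}


def without_proxy_vars(environ=None):
    """Return a copy of the environment with all proxy variables removed."""
    env = os.environ if environ is None else environ
    return {k: v for k, v in env.items() if not is_proxy_var(k)}


if __name__ == "__main__":
    for name, value in find_proxy_vars().items():
        print(f"{name}={value}")
```

Anything the script prints is worth checking in the Windows environment-variable dialog. If it prints nothing, the proxy may instead come from pip's own configuration, which `pip config list` displays.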

Reposted from: http://jaalz.baihongyu.com/
